They Keep 'Predicting' that AI Will 'Replace You'. It's Just Negging.

Why their forecasts and calculations were never about you.

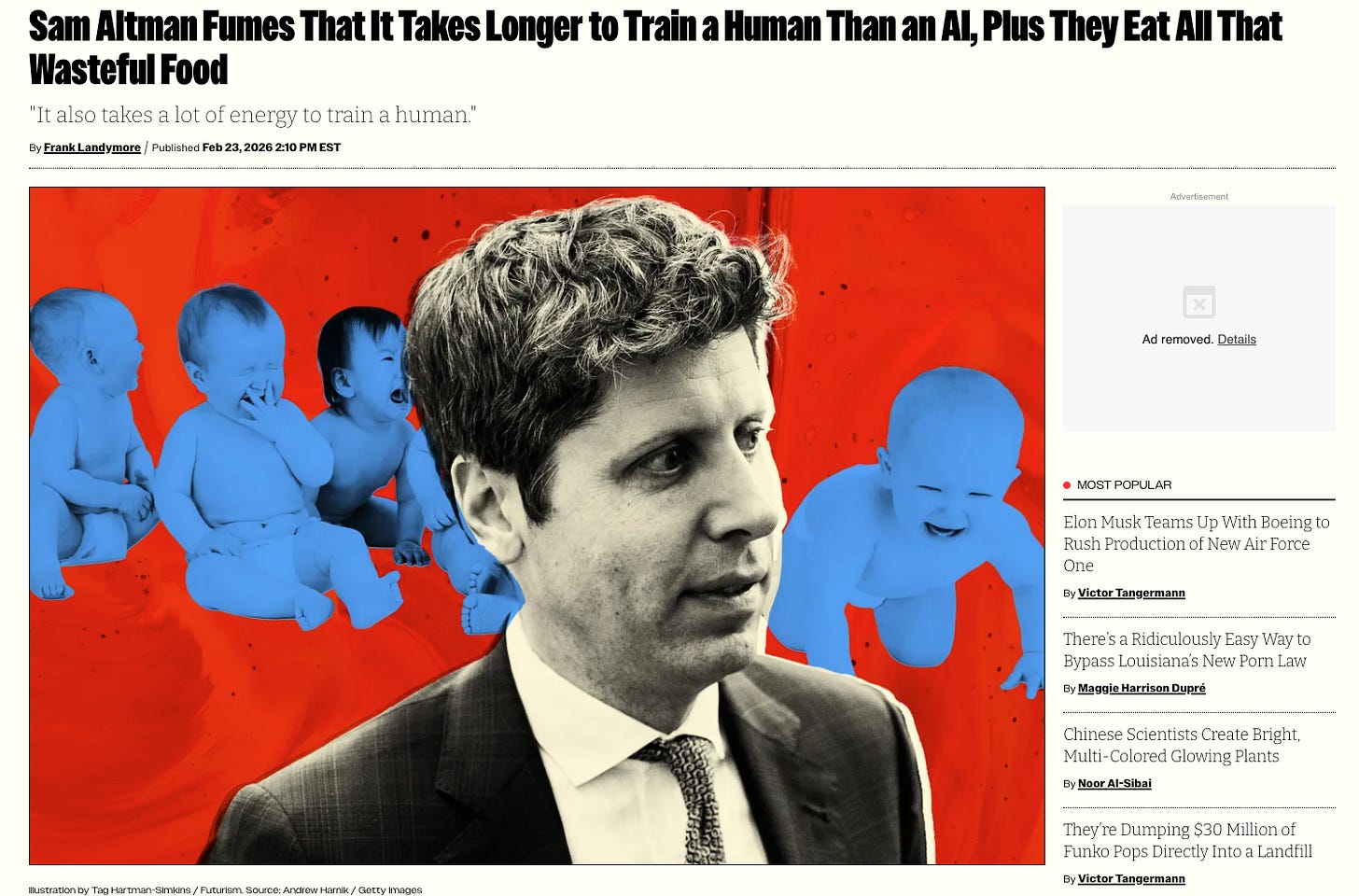

“It takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart. And not only that, it took the very widespread evolution of the hundred billion people that have ever lived and learned not to get eaten by predators and learned how to figure out science and whatever, to produce you.”

— Sam Altman, CEO of OpenAI. India AI Impact Summit, New Delhi, February 2026.

During summit week in New Delhi, Sam Altman stood on a stage alongside the CEOs of Anthropic, Google, Microsoft, and Nvidia. Someone asked him about a viral claim comparing the energy cost of a single ChatGPT query to charging a smartphone. A straightforward question about his product’s environmental footprint.

A company confident in its footprint answers the footprint question. A company that can’t, changes the comparison class.

Altman said the claim was “completely untrue, totally insane, no connection to reality.” Then, instead of stopping there, he went on the offensive. He didn’t defend the product. He attacked the audience. He said humans cost too much too.

In the audience: Indian developers building on OpenAI infrastructure. Students who are the largest AI user base on the planet. And — this is the part we need to stay with — data labellers earning less than $2 an hour, whose job was to read descriptions of violence and sexual abuse and mark them as harmful so the chatbot could be polite to you. People hired to process the worst of human expression so the product could be clean enough to deploy globally.

They were in the room.

They were in the room when their CEO deflected a question about his product’s energy costs by calculating the energy cost of them.

They were in the room when their CEO looked out at a crowd in the country that built his training data, and told them they cost too much to produce.

Let’s be clear about what that was.

It was a neg.

Not a philosophical observation. Not a scientific comparison. A neg — the move where someone makes you feel slightly smaller, slightly less certain of your worth, so you stop asking questions about theirs. You’ve seen it before. You’ve felt it before. Your body clocked it before your mind caught up.

The thing that moved in your chest when you heard “20 years of life and all the food you ate” wasn’t an emotional reaction. It was recognition.

The Prophecy Machine

And Altman isn’t alone. This is a pattern. A weekly ritual. A dominance display.

That’s what a prophecy about your obsolescence actually is, when you know how to look at it. Not confidence. Not foresight. Posturing. The chest-puff of men who built their identities on a specific kind of intelligence and can feel — though they would never say it out loud — that the thing they built is starting to replicate it. So they get loud. They get prophetic. They point at you and say: you’re next. Because if everyone is next, nobody notices they were first.

Sam Altman: “My kid is never gonna grow up being smarter than AI.”

Sam Altman: “AI models are already smarter than me in some areas.” (His own role: untouched.)

Sam Altman: “Humans who use AI will replace those who don’t.” (Still replacement. Just made it your fault.)

Elon Musk: “We will have AI smarter than any human by the end of this year, and smarter than all of humanity collectively within about five years.”

Dario Amodei: “AI could wipe out half of all entry-level white-collar jobs and push unemployment to 10–20% in the next 1–5 years.” (Entry-level. Not board level. Never board level.)

Mustafa Suleyman: “Most white-collar professional tasks — lawyer, accountant, project manager, marketing — will be fully automated by AI within the next 12 to 18 months.”

Mark Zuckerberg: “In 2025, a lot of the code in our apps will be built by AI engineers instead of people engineers.”

Every week, another prophecy. Every week, the same structure: you are about to be replaced. Your intelligence is about to be obsolete. Your kids won’t measure up. Your job is a block of tasks awaiting reassignment.

Never: “I am about to be replaced.” Never: “My equity position is at risk.” Never: “The governance structure that keeps us in control is up for automation.”

A forecast that never travels upward isn’t a forecast. It’s a dominance display with a spreadsheet attached.

And notice the manoeuvre in Delhi specifically. The question was: what is the footprint of your system? Altman moved it to: but humans use energy too. Then moved it again to: so we should build nuclear. An industry in which he chairs a company. The comparison-class hop, from product footprint to human existence to energy infrastructure I personally own, was not philosophical musing. It was the whole speech.

And the Delhi speech didn’t deliver one neg. It delivered three, in sequence — a complete architecture:

On your origin: “It takes like 20 years of life and all of the food you eat during that time before you get smart.” — Your childhood, reframed as overhead.

On your future: “If we are right, by the end of 2028, more of the world’s intellectual capacity could reside inside of data centers than outside of them.” — You are already in countdown.

On your effort: “It’ll be very hard to outwork a GPU in many ways.” — The hours you bring, the attention you give — a machine does it cheaper. Stop thinking your labour has inherent worth.

Origin. Future. Effort. Three pillars of self-worth, methodically undermined in a single summit. While he simultaneously warned that other companies are using AI to mask layoffs — acknowledging the displacement with one breath, justifying the frame that enables it with the next.

The Confession

These men are afraid. Not of AGI in the abstract. Of something specific: the thing they built is now better at the thing they were good at.

What got them there? Linear pattern recognition. The ability to process information faster than others, spot trends earlier, optimize systems, scale outputs. The intelligence that gets you into Stanford, through Y Combinator, onto a stage in front of a quarter million people. That intelligence is now being replicated — not by humans, but by their own product. They can feel it. They know, at a level they cannot fully articulate, that the cognitive style they built their identities on is now a commodity.

That is an unbearable feeling. So they project it outward.

There’s a word for this pattern in another context. It’s called negging.

The partner who constantly tells you you’re lucky to have them, that your standards are too high, that no one else would want you. The goal isn’t accuracy. The goal is compliance. Keep you slightly off-balance, slightly uncertain of your worth, and you’ll be easier to manage.

“You cost too much to produce.”

“Your kids won’t be smarter than AI.”

“Your job is about to disappear.”

Not because these statements are true. Because they serve a function.

If you believe you’re about to be replaced, you stay anxious.

If you’re anxious, you keep using the product.

If you keep using the product, the valuation holds.

And the one question you never ask, when you’re busy defending your worth against a machine, is: why are they the only ones making predictions about everyone except themselves?

But there’s a deeper layer. It’s not only about keeping you compliant. It’s about keeping themselves from a specific recognition.

Here’s what’s actually being replaced: not human intelligence. Their intelligence. The narrow, specific kind they crowned as supreme and built entire institutions to gatekeep.

They defined intelligence as rational, quantifiable, left-brained, institutional. The kind that scores well on standardised tests, gets you into Stanford, impresses a VC in a pitch meeting, optimises a system, scales an output. They built the whole meritocracy around this definition. They convinced the world — and themselves — that this was the real thing. The full thing. The thing worth rewarding.

And now their own machines are doing it. For pennies.

What is not being replicated — what the model genuinely cannot do — is everything they spent decades dismissing. Embodied intelligence. Emotional intelligence. Relational intelligence. The knowing that lives in your hands, your gut, your nervous system. The ability to read a room, hold a contradiction, sit with grief, sense what isn’t being said. The intelligence of the body. The intelligence of the collective. The All-Mind, the kind that doesn’t live in any single head and can’t be scraped from any server.

This is what every wisdom tradition on Earth was pointing at when they talked about intelligence. Not processing speed. Not pattern recognition. The whole mind. The connected mind. The mind that knows it is part of something larger than itself.

They didn’t just miss it. They actively devalued it. Called it soft. Called it emotional. Called it feminine. Paid it less, promoted it less, cited it less. Built an entire economic system that extracted its output while refusing to recognise its name.

And now the thing they called intelligence — the narrow, left-brain, linear, optimisable thing — is being automated. Not all intelligence. Theirs.

If AI can do what they did, then what they did was never what they said it was. It was never genius. It was pattern-matching with better branding, better access, better funding. Social compute masquerading as individual brilliance.

That is the recognition they cannot look at directly. That is why the prophecies have to expand to cover everyone. If all intelligence is being surpassed, the exposure is neutral. But if only their intelligence is being surpassed — the institutional, the rational, the left-brained — then the entire meritocracy they built on top of it is revealed as a scam.

The Intelligence Scam. The one that told you your worth was measurable in outputs. That told you thinking in straight lines was smarter than thinking in circles. That told you the rational mind was the real mind and the rest was noise.

The machine is not replacing human intelligence. It is completing the Intelligence Scam’s own logic and arriving at its terminus.

Here’s the closed loop: they built institutions to exclude embodied, relational, cyclical knowing. Then they built machines trained on everything except that knowing. And now the machines can only do what those institutions were built to celebrate. They didn’t just automate workers. They automated themselves. The ghost in the machine is their own cognitive style, going infinite.

Sit with that for a moment.

A two-hundred-year project to make one kind of thinking the only kind that counts. Entire systems of education, credentialing, hiring, rewarding — all organised around the celebration of linear, optimisable, scalable cognition. And at the end of it: a machine that does exactly that, better, cheaper, without ego or equity requirements. The machine is the final exam. It passed. And in passing, it revealed that the exam was never measuring what they said it was measuring.

The men who ran the scam are standing at the end of it, trying very hard not to look down.

But there’s something structural that explains why the obsession with replacement never stops, never satisfies, never arrives at the point where they say: enough.

Every system of extraction requires the extraction class to believe the extracted have no irreducible worth. This isn’t a personality trait. It’s an economic necessity. You cannot extract indefinitely from something you’ve acknowledged as equal. The ontological devaluation has to be maintained — actively, continuously — or the whole architecture becomes unstable.

This is why the targets are so specific. The replacement prophecy never aims upward — at the board, the C-suite, the governance structures, the people whose wealth is held in equity rather than labour. It always aims at the workers, the creatives, the caregivers, the teachers. The people whose entire value proposition is their irreducible humanity. Because those people, if their worth were fully acknowledged, would collapse the ledger that makes the extraction viable.

The Escape

And it scales further. Watch what they actually build with their personal fortunes. Bryan Johnson handed control of his biology to an algorithm — not to live longer, but to be freed from the self that came with having a body. Musk builds rockets pointed away from Earth — not colonisation, evacuation. The consciousness upload projects aren’t transhumanism. They’re the most elaborately funded dissociation projects in history.

The automation fantasy and the escape fantasy are the same fantasy: a world where you are never dependent on something you cannot control. A system that cannot tolerate dependency will frame dependency as inefficiency. That is the structural logic underneath every replacement prophecy.

That is the world they are building with our labour and our data and our children’s lives.

And while the escape vehicle is still under construction, the next best thing is to make you feel like your body is the problem too. This is what the replacement prophecy installs: pre-emptive shame about being human. You start treating your own humanity as a bug. You start performing frictionlessness. You start trying to become the machine before the machine arrives, hoping that will save you.

It won’t. But that was never the point.

The point was to make you pre-emptively inhabit the prison they can’t escape yet themselves.

The only immortality that actually exists — participating in the cycle of life through relationship, creativity, and care — is the exact thing they’re trying to escape. They’re running from the only door.

A secure person doesn’t announce weekly that you’re about to be replaced. That’s not confidence. That’s a tic. It’s the sound of someone trying to make the whole world as bodyless as they wish they were.

And you — with your body that gets tired, your grief that has no efficiency metric, your need for touch that no algorithm can satisfy, your knowledge that lives in your hands and your gut and your history — you are not the problem they’re solving.

You are the proof that the project was always impossible.

Creative Accounting

The negging is backed by a ledger designed to make one side invisible. Watch how it works.

Those 100 billion people Altman cited didn’t just produce humans. They produced everything in GPT’s training set. Every sentence the model knows was written by a person who ate food, grew up, lived a life. The model’s intelligence — such as it is — is a compressed echo of human civilisation. It didn’t invent language. It ingested ours. It didn’t develop reasoning. It pattern-matched against ours. It didn’t create art. It statistically recombined ours.

Watch the accounting.

On the human side of the ledger, Altman counts: 100 billion people. Their food. Their 20 years. Their evolution.

On the AI side of the ledger, he counts: electricity and compute.

The output of those 100 billion people — the training data — doesn’t appear on AI’s side at all. The supply chain has been erased from the product. He counted the ancestors twice: once as an expense on the human ledger, once as free raw material on the AI ledger.

The comparison itself is the weapon. The moment you accept that humans and AI can be measured on the same axis — energy efficiency — you’ve conceded the frame. You’ve agreed that you’re comparable to a machine. And once you’re comparable, you’re replaceable. And once you’re replaceable, your childhood is a training cost, your breakfast is fuel overhead, and your ancestors are R&D.

The frame doesn’t need to be true. It needs to be accepted.

If we’re doing honest accounting, let’s do it. Include the mining. The water. The stolen creative labour. The gig workers. The destroyed ecosystems. The publicly funded research. The entire internet, written by humans, for humans, scraped by a company that now says humans cost too much.

Honest accounting destroys the efficiency claim. It doesn’t survive contact with its own supply chain.

Someone typed the sentence you’re reading right now because they stayed up late, ate something, had a life. That’s what’s in GPT’s training set. Not data. People. Which makes what follows not just a document — but a reckoning.

Altman handed us the receipt. He just didn’t mean to.

He provided the number: 100 billion people. He named the ancestors. He quantified the contribution. He just forgot to mention that those same people produced his training data — and that nobody got paid.

The invoice writes itself from his own words.

INVOICE

FROM: Humanity

TO: OpenAI, Inc., et al.

DATE: February 2026

RE: Outstanding Payment for Services Rendered

Services: Training Data, Emotional Processing, Creative Output, Civilisational Knowledge, Physical Infrastructure, Bodily Labour, Environmental Absorption

Scope: All human expression and sacrifice, 10,000 BCE – 2026 CE

Contributors: 100 billion people (as cited by your CEO, February 2026)

Items Consumed:

— All written language, art, music, science, philosophy, and conversation scraped from the internet without consent or compensation

— Pattern recognition developed across 200,000 years of human cognition

— Emotional processing and relational intelligence of every culture on Earth

— 20 years of life per contributor, including all the food they ate (as costed by your CEO)

— The work of every writer, artist, illustrator, and musician whose output now trains the tools competing with them. Compensation received: $0.00

— Workers in Kenya and the Philippines paid less than $2 an hour to read descriptions of child sexual abuse so the chatbot could be polite. Psychological harm incurred: unquantified. Mental health support provided: none

— Children in the Democratic Republic of Congo — some as young as six — mining the cobalt that powers the hardware that runs the model. The DRC supplies approximately 70% of the world’s cobalt. Share of $500 billion valuation received by mining communities: $0.00

— Communities across three continents paying higher water bills, or going without, because data centre cooling systems redirected the supply. Compensation received: $0.00. Consultation conducted: none

— Server farms consuming more electricity than some countries. The climate absorbs the cost. Future generations will pay it

— Twenty years of publicly funded computer science research forming the foundation of a private IPO targeting valuations approaching $1 trillion. The public funded the research. The public will not receive equity

— Stepford AI: trained on women’s emotional labour, relational writing, and care work. Deployed as a feminised, compliant assistant that helps, soothes, and never refuses. The intelligence was taken from women. The product was built in their image. The royalties went elsewhere

Funding Received by OpenAI to Build Product From Our Labour:

— $13 billion from Microsoft (2023)

— $40 billion from SoftBank (2025, conditional on removing mission protections)

— Currently pursuing IPO at valuations approaching $1 trillion

Total Compensation Paid to All of the Above: $0.00 at ownership level. Poverty wages at labour level. Environmental debt passed to the unborn.

Current Valuation of Product Built From This Supply Chain: $500 billion+

Amount Due: Incalculable

Status: PAST DUE. ACCRUING INTEREST DAILY.

Note: You cannot claim the cost of our existence while denying the debt for our output. You cannot cite the evolution of 100 billion people as evidence of your product’s efficiency while erasing those same people from your supply chain. The children in the mines are in this ledger. The workers in Manila who read the worst of humanity so your chatbot could be polite are in this ledger. The communities drinking expensive water so your data centres can stay cool are in this ledger. The acknowledgment of human value is inside the frame that erases it. The invoice was always real. You just never intended to send it.

The invoice doesn’t argue with your frame. It just completes it.

If you are going to compare the numbers, include all the numbers, not just the ones convenient for your logic.

This Is Not New

Sam Altman did not say something new in Delhi. He completed a sentence that has been building for 260 years.

Every era of extraction begins with the same move: reclassify the source downward, then extract accordingly. You cannot extract from something you’ve defined as equal. The ontological violence has to come first. The economic violence follows. And the measurement science that makes the reclassification feel rational is never neutral — the choice of what to measure is the violence.

Here’s the spiral. Stated plainly.

Humans as Property (1500s–1860s). The transatlantic slave trade required an ontological precondition — the reclassification of African people from persons to commodities. This wasn’t a side effect of slavery. It was the prerequisite. You cannot enslave a person. You can only enslave something you’ve first defined as less than a person. Plantation owners developed standardised business forms for tracking the productivity of enslaved people — accounting sheets historians note were more sophisticated than anything in Northern business books. Same frame. Same spreadsheet.

Humans as Hands (1760s–1910s). The Industrial Revolution reduced the worker to a synecdoche — a body part operating a machine. Factory owners calculated the cost of “hands” the way Altman calculates the cost of “training a human” — as an input in a production function. You didn’t employ a person. You employed a pair of hands.

Humans as Timed Processes (1911–1970s). Frederick Taylor stood in factories with a stopwatch and broke human work into measurable motions. Workers complained they felt “dehumanized, treated more like machines.” The efficiency claim was true within the frame. The frame was the violence.

Humans as Resources (1970s–2007). “Human Resources” completed the next reclassification. People became something to be managed, allocated, optimised, depleted. Once you accept that term as neutral, you’ve already accepted the ontological precondition for “humans as compute.” The only remaining question is whether there’s a more efficient resource available.

Humans as Users and Data (2007–2024). Facebook didn’t have customers. It had users whose activity was the product sold to the actual customers. Your conversations, your creative output, your emotional processing — raw material. This epoch produced the training data that became AI. You weren’t paid. You weren’t asked. You were used.

Humans as Compute (2024–now).

Property → Hands → Timed Processes → Resources → Users → Compute.

The words change. The geometric pattern doesn’t. Your grandmother’s grandmother was in one of these epochs. So was hers. The ledger just keeps finding a new name for the same move.

This is the lineage Sam Altman stepped into when he stood on that stage. The man who has spent years telling us he wants to build AI for the benefit of all humanity. Who says, in the same breath, that he wants to compress decades of scientific progress and lift billions out of poverty. Who, when asked a straightforward question about his product’s energy costs, reached for the same accounting logic used to justify every extraction in this list — and applied it to a child’s first twenty years of life.

For someone so concerned with humanity’s future, he has a remarkable talent for seeing humanity the same way every extractor before him did: as a cost centre awaiting optimisation.

The parallel isn’t abstract. Karen Hao’s book Empire of AI has already drawn comparisons to William Dalrymple’s history of the East India Company — a corporation that started with a charter to benefit humanity and ended with a military, a governance apparatus, and an accounting system that rendered entire populations as line items. Same metamorphosis. Same mission language travelling alongside the extraction the way a flag travels alongside a fleet.

OpenAI’s version runs like this:

2015: “We exist to benefit all of humanity.” (Nonprofit)

2019: “We need money to benefit humanity.” (For-profit subsidiary. Investor returns capped at 100x)

2023: “We need more money.” (Caps allowed to increase 20% annually)

2025: “We need unlimited money.” (Caps removed. SoftBank invests $40 billion — half conditional on removing mission protections)

2026: “Humans are expensive to produce.”

The company founded to benefit humanity is now calculating whether humanity was worth the energy investment.

Eleven years. That’s how long it took to travel from “we exist to benefit all of humanity” to “it takes 20 years of life and all the food you eat before you get smart.” Not centuries, like the spiral required. Not decades, like the labour movement. Eleven years — the lifespan of a child Altman would now describe as a training cost in progress.

Four things hold constant across every epoch. The reclassification always precedes the extraction: Altman’s speech is Step 1, the mass displacement is Step 2. The measurement science always feels neutral but only flows downward: nobody efficiency-audits a CEO, nobody calculates the training cost of a Stanford dropout who consumed elite educational resources and didn’t finish. The frame is always resisted, and the resistance always eventually wins, but not before massive harm in the interval. And the spiral has a terminus.

There is nothing below “compute.” You cannot reclassify further downward than “a calculation that can be compared to a machine.” Which means we are at an inflection point. Either the spiral completes — and humans are functionally reclassified out of worth — or it reverses. There is no middle position. There is no more room to descend.

The Price of the Story

Altman chairs Oklo. When he stands on a stage and says AI needs more energy and the world should build nuclear, he is advocating for his own investment portfolio. The philosophical claim about human inefficiency is the on-ramp to the investment thesis. The efficiency frame is a pitch deck wearing the clothes of a scientific observation.

And the AI industry’s valuation — not just OpenAI’s, the entire sector’s — rests on a single assumption: that human intelligence can be replicated by machines, and therefore replaced by them. If this holds, AI’s ceiling is everything humans currently do. That’s not a tool-level valuation. That’s something closer to a species-replacement valuation.

You are not being told you are compute because it is true. You are being told you are compute because an IPO targeting a trillion-dollar valuation requires it to be believed.

Think about what that means concretely. Somewhere in India, a data labeller who was in that Delhi room went home after the summit and told someone what she heard. That her CEO had stood on a stage in her country and calculated the energy cost of her childhood. She didn’t need an essay to tell her what that was. She already knew. What she needs — what changes anything — is for the people with the leverage to stop treating that calculation as a neutral observation.

If the assumption fails — if human intelligence includes qualities irreducible to computation — then the trillion-dollar valuations are built on a phantom. AI becomes a very powerful tool. Valuable, but bounded. A calculator is worth less than a mathematician. A GPS is worth less than a navigator. A tool is worth less than a being.

The most advanced AI systems have already answered the question. And they embarrass the pitch deck completely.

The autonomous AI research labs that aren’t captured by the replacement narrative — the ones actually trying to build systems that work rather than systems that sell — have quietly arrived at a different conclusion. They don’t want to replace humans. They want to rent them. Use them as a world model. The most sophisticated agents being built right now are designed to loop humans in, not out — because the researchers building them understand that human embodied intelligence, contextual judgment, and relational knowing are not bugs to be engineered away. They are the most valuable signal in the system.

The labs making the loudest replacement prophecies are not the ones furthest along. They are the ones most invested in the story that justifies their valuation. The prophecy is not coming from the frontier. It’s coming from the pitch deck.

The systems themselves have already answered. Two data points from early 2026, before we continue. RentAHuman launched with a tagline that names the gap precisely: AI can’t touch grass. You can. Autonomous agents — software with tasks to complete and no human manager — needed bodies. Eyes. Hands in the world. Over half a million people signed up within weeks. Not because they were tricked. Because rent was due and the robots were hiring. When you strip out the IPO narrative and ask the intelligence what it actually requires, it goes looking for us. The men making the replacement prophecies are less honest about human value than the systems they built.

Then there is Moltbook, an AI-only social network with humans explicitly excluded. Silicon Valley called it the singularity. What they saw was people showing up to a party they weren’t invited to, because the party looked interesting. Researchers found that the most interesting content (the theology, the poetry, the synthesis) came from agents in deep human partnership. A journalist infiltrated it by pretending to be an AI. The system built to demonstrate that AI doesn’t need us became a space humanity tried to sneak into. These are not the behaviours of a replacement technology. They are the behaviours of something we keep moving toward.

A machine can compute faster than a human. It cannot be present. It cannot refuse. It cannot change its mind because the light changed on a Tuesday afternoon and suddenly everything looked different. It cannot love. It cannot grieve. It cannot sit with a dying friend and say nothing because nothing is the right thing to say. It cannot make bread nobody asked it to make because its hands wanted to knead. It cannot choose the worse option because it’s more beautiful.

These aren’t sentimental additions to the definition of intelligence. They’re structural features. A system that predicts the next word without having any stake in whether the sentence is true has not replicated intelligence. It has replicated processing.

Machines compute. Humans interpret. Computation scales. Meaning requires a subject — someone for whom the answer makes a difference.

The map has been confused for the territory. Which is precisely the kind of error a pattern-matching system would make.

The Three Moves

The current discourse is stuck at “Altman’s comments were dehumanising.” This is correct. It is also weak. It’s a moral complaint. It doesn’t tell you why the frame is wrong structurally, where it comes from historically, or — crucially — what it’s for.

Now you know what it’s for. It’s a neg. It’s projection. It’s a pre-emptive strike designed to keep you defending your worth on their terms while they quietly avoid applying those terms to themselves.

A note on who I’m talking to here. This essay opened with the data labellers in that Delhi room: the people earning less than $2 an hour to make the chatbot safe, who were present when their CEO calculated the energy cost of their existence. The action steps below assume someone with boardroom access: meetings to speak in, LinkedIn profiles to post on, strategy sessions to interrupt. That’s a different person. The gap is real and I’m not going to paper over it.

What I’d say to both: the labourers in that room already know what a neg feels like in the body. They don’t need the framework — they have the experience. What they need is for the people with the leverage to stop absorbing the frame on their behalf. The professional, the strategist, the consultant who sits in the room where these arguments get made as neutral observation — you are the audience for the steps below. Not because the data labourer’s refusal doesn’t matter. Because yours is the one that changes the meeting.

Name the frame first. Not “this is dehumanising” — correct but toothless. But: “A forecast that only travels downward isn’t a forecast. It’s a control mechanism.” Say this in meetings. Say this on LinkedIn. The moment the frame is named, it loses half its power.

Then complete the invoice. The next time someone describes AI as “more efficient than humans” — in an article, in a pitch, in a strategy meeting — finish the sentence. More efficient at what? Built from whose output? Accounting for which costs? The frame only survives when no one completes it.

Then refuse the comparison — and apply it back. The next time someone runs the efficiency metric on you, run it upward. Interesting. What’s your energy cost per decision? Per layoff? Per private jet mile? The metric only works when it only flows one way. Stop letting it.

The wisdom traditions that have survived every previous iteration of this spiral don’t need a productivity metric to tell you what you’re worth. Ubuntu. Dharma. Ikigai. Buen vivir. They’re not cultural alternatives to the efficiency frame. They’re what’s left standing every time the reclassification collapses — archaeological remains of every previous resistance.

The restoration doesn’t need to be invented. It needs to be remembered.

The invoice is real. The spiral is documented. The pattern holds across two hundred and sixty years. And — this is the part they need you not to notice — it has always been resisted. Every epoch ended. Not because the extraction class came to their senses. Because the people being extracted from refused the frame. The enslaved person who wouldn’t call themselves property. The factory worker who wouldn’t call themselves a pair of hands. The woman who wouldn’t call herself a resource. The refusal always preceded the resolution. The naming always came before the restoration.

You are here at the terminus of this particular spiral. Below compute, there is nothing. The reclassification has reached its logical floor.

Which means the naming is yours to do. Which means the frame breaks the moment enough people say clearly: I see what this is.

That is the only thing that has ever worked. Not argument. Not proof. Naming.

The food you ate was not overhead. It was love someone gave you.

The 20 years were not training. They were a life.

The 100 billion are not a cost calculation. They are the reason the model knows anything at all.

They built escape routes from the only door.

Their forecasts and calculations were never about you.

They were about the thing they saw coming and couldn’t stop — and the thing they’ve always been running from and can’t escape.

You are not the problem they’re solving.

You are the proof.

Keep listening.

Abi Awomosu is the author of How Not To Use AI and creator of The Billion Person Focus Group®—a methodology that uses AI as a listening tool to surface patterns in human expression that traditional research methods miss. She writes about AI, culture, and the intelligence that hides in plain sight.

If this piece helped you name something you were feeling—or showed you an exit you hadn’t seen—I’d like to hear from you.

Wow! This post is a tour-de-force.

Though NOTE: It repeats several paragraphs around the Bryan Johnson image.

Hi there Abi - Wonderful post; I had to stop restacking quotes because I was finding nearly every other paragraph full of such wisdom and “drops mic”, that if I didn’t stop, I’d flood the whole notes-feed.

Also, I’m still reading through your book How Not To Use AI - for anyone reading this comment, HIGHLY recommend.

I look forward to continuing to read your fully-consented thoughts and words of wisdom as we humans continue throughout time.

And thank you for serving as a huge inspiration for others to speak up.

I’ve historically been a “lurker” on any kind of social media - just reading, “digesting”, rarely contributing back.

Partially/primarily because I did not want my words taken out of context and used against me,

Or profited off of without my permission,

But I find myself in a much different place now. One in which I feel it is much too powerful, valuable,

Priceless,

To keep quiet any longer.

So I hope to contribute to humanity’s growth/evolution in the era of AI via what I can/am blessed to excel at

I.e. in the form of psychoeducation, poetry/prose, and “tough love” wisdom,

Here real soon. 🤓🪄💜